cryptography

What is cryptography?

Cryptography is a method of protecting information and communications using codes, so that only those for whom the information is intended can read and process it.

In computer science, cryptography refers to secure information and communication techniques derived from mathematical concepts and a set of rule-based calculations called algorithms, to transform messages in ways that are hard to decipher. These deterministic algorithms are used for cryptographic key generation, digital signing, verification to protect data privacy, web browsing on the internet and confidential communications such as credit card transactions and email.

Cryptography techniques

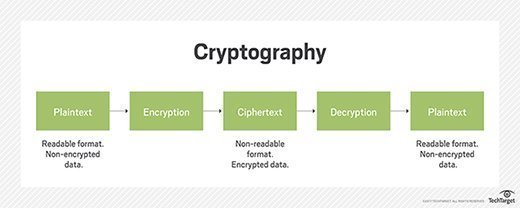

Cryptography is closely related to the disciplines of cryptology and cryptanalysis. It includes techniques such as microdots, merging words with images and other ways to hide information in storage or transit. In today's computer-centric world, cryptography is most often associated with scrambling plaintext (ordinary text, sometimes referred to as cleartext) into ciphertext (a process called encryption), then back again (known as decryption). Individuals who practice this field are known as cryptographers.

Modern cryptography concerns itself with the following four objectives:

- Confidentiality. The information cannot be understood by anyone for whom it was unintended.

- Integrity. The information cannot be altered in storage or transit between sender and intended receiver without the alteration being detected.

- Non-repudiation. The creator/sender of the information cannot deny at a later stage their intentions in the creation or transmission of the information.

- Authentication. The sender and receiver can confirm each other's identity and the origin/destination of the information.

Procedures and protocols that meet some or all the above criteria are known as cryptosystems. Cryptosystems are often thought to refer only to mathematical procedures and computer programs; however, they also include the regulation of human behavior, such as choosing hard-to-guess passwords, logging off unused systems and not discussing sensitive procedures with outsiders.

Cryptographic algorithms

Cryptosystems use a set of procedures known as cryptographic algorithms, or ciphers, to encrypt and decrypt messages to secure communications among computer systems, devices and applications.

A cipher suite uses one algorithm for encryption, another algorithm for message authentication and another for key exchange. This process, embedded in protocols and written in software that runs on operating systems (OSes) and networked computer systems, involves the following:

- Public and private key generation for data encryption/decryption.

- Digital signing and verification for message authentication.

- Key exchange.

Types of cryptography

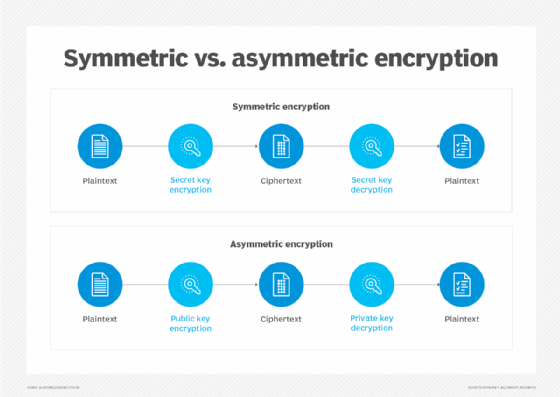

Single-key or symmetric-key encryption algorithms create a fixed length of bits known as a block cipher with a secret key that the creator/sender uses to encipher data (encryption) and the receiver uses to decipher it. One example of symmetric-key cryptography is the Advanced Encryption Standard (AES). AES is a specification established in November 2001 by the National Institute of Standards and Technology (NIST) as a Federal Information Processing Standard (FIPS 197) to protect sensitive information. The standard is mandated by the U.S. government and widely used in the private sector.

In June 2003, AES was approved by the U.S. government for classified information. It is a royalty-free specification implemented in software and hardware worldwide. AES is the successor to the Data Encryption Standard (DES) and DES3. It uses longer key lengths -- 128-bit, 192-bit, 256-bit -- to prevent brute-force and other attacks.

Public-key or asymmetric-key encryption algorithms use a pair of keys, a public key associated with the creator/sender for encrypting messages and a private key that only the originator knows (unless it is exposed or they decide to share it) for decrypting that information.

Examples of public-key cryptography include the following:

- RSA (Rivest-Shamir-Adleman), used widely on the internet.

- Elliptic Curve Digital Signature Algorithm (ECDSA) used by Bitcoin.

- Digital Signature Algorithm (DSA) adopted as a standard for digital signatures by NIST in FIPS 186-4.

- Diffie-Hellman key exchange.

To maintain data integrity in cryptography, hash functions, which return a deterministic output from an input value, are used to map data to a fixed data size. Types of cryptographic hash functions include SHA-1 (Secure Hash Algorithm 1), SHA-2 and SHA-3.

Cryptography concerns

Attackers can bypass cryptography, hack into computers responsible for data encryption and decryption, and exploit weak implementations, such as the use of default keys. Cryptography makes it harder for attackers to access messages and data protected by encryption algorithms.

Growing concerns about the processing power of quantum computing to break current cryptography encryption standards led NIST to put out a call for papers among the mathematical and science community in 2016 for new public key cryptography standards. NIST announced it will have three quantum-resistant cryptographic algorithms ready for use in 2024.

Unlike today's computer systems, quantum computing uses quantum bits (qubits) that can represent both 0s and 1s, and therefore perform two calculations at once. While a large-scale quantum computer might not be built in the next decade, the existing infrastructure requires standardization of publicly known and understood algorithms that offer a secure approach, according to NIST.

History of cryptography

The word "cryptography" is derived from the Greek kryptos, meaning hidden.

The prefix "crypt-" means "hidden" or "vault," and the suffix "-graphy" stands for "writing."

The origin of cryptography is usually dated from about 2000 B.C., with the Egyptian practice of hieroglyphics. These consisted of complex pictograms, the full meaning of which was only known to an elite few.

The first known use of a modern cipher was by Julius Caesar (100 B.C. to 44 B.C.), who did not trust his messengers when communicating with his governors and officers. For this reason, he created a system in which each character in his messages was replaced by a character three positions ahead of it in the Roman alphabet.

In recent times, cryptography has turned into a battleground of some of the world's best mathematicians and computer scientists. The ability to securely store and transfer sensitive information has proved a critical factor in success in war and business.

Because governments do not want certain entities in and out of their countries to have access to ways to receive and send hidden information that might be a threat to national interests, cryptography has been subject to various restrictions in many countries, ranging from limitations of the usage and export of software to the public dissemination of mathematical concepts that could be used to develop cryptosystems.

The internet has allowed the spread of powerful programs, however, and more importantly, the underlying techniques of cryptography, so that today many of the most advanced cryptosystems and ideas are now in the public domain.